You head out after a long day at work to find a bird (or two!) has left a present on your car. Driving home, you hear one “splat!” after another on your windshield. The bugs are in full force tonight. Before ADAS technology, the solution here was pretty straightforward: wash, rinse, wax, repeat.

However, for those of us in the ADAS community, even tiny bugs are a big deal. Right now, some in our wider community are examining how insects, bird droppings, and debris in general affect sensor performance. Some are proposing ways to clean sensors on the move, while others focus on preventing bugs and debris from building up in the first place.

Dr. Andrew Baker-Campbell, head of the Sensors and Devices group at TTP, looks at how engineers are addressing this pesky problem of bugs and why sensor cleaning technology is so important.

My colleague Tom Jellicoe (Consultant, Autonomous Driving Business Development for TTP), our resident expert on sensors for autonomous vehicles, invited me along to AutoSens in Brussels last September. I enjoyed some great discussions, both with academics exploring intriguing new ways to fuse disparate data from radar and optical systems and with hardware developers using clever photonics tricks to miniaturize LiDAR. But amid all this intellectual stimulation, the most interesting conversations I had were about the challenges of integrating these sensors into real vehicles.

One such challenge is when a sensor’s view of the world becomes obscured by water or dirt. This was a hot topic during an excellent round-table hosted by Christof Buettner, Senior Product Line Director at Veoneer, a leader in automotive safety sensors and systems. I later had the opportunity to speak to Omer Keilaf, CEO of Innoviz Technologies, who summed up the challenge.

“It’s of importance to handle water or dirt on the sensor, but there is even higher importance of recognizing the obscureness as it happens,” he explained. “This seems to be a very challenging task for LiDARs which need to differentiate between a real object beyond the LiDAR, and elements that are right on the LiDAR window. Missed detection would lead to a lack of availability, and false detection would lead to overuse of the cleaning system, which might become unavailable when really needed.”
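To make Keilaf’s point concrete, here is a minimal Python sketch of one simple heuristic for spotting window contamination. It assumes, hypothetically, a LiDAR that reports the range of every return, including very short-range returns scattered back by dirt on its own window; real sensors may instead (or also) watch for a loss of returned light. Returns landing within 10 cm of the sensor are counted as window hits, and a persistence counter only requests cleaning once frames have looked blocked several times in a row, which guards against the false detections that would waste fluid. All names and thresholds (`WINDOW_RANGE_M`, `BLOCK_FRACTION`, `PERSIST_FRAMES`, `BlockageMonitor`) are illustrative, not anyone’s product code.

```python
from dataclasses import dataclass
from typing import List

# Hypothetical thresholds, chosen for illustration only.
WINDOW_RANGE_M = 0.1   # returns closer than this are treated as hitting the window
BLOCK_FRACTION = 0.3   # fraction of window hits that marks a frame as blocked
PERSIST_FRAMES = 5     # consecutive blocked frames required before requesting cleaning


def window_hit_fraction(ranges_m: List[float]) -> float:
    """Fraction of returns in one frame that come from (or just beyond) the window."""
    if not ranges_m:
        return 0.0
    near = sum(1 for r in ranges_m if r < WINDOW_RANGE_M)
    return near / len(ranges_m)


@dataclass
class BlockageMonitor:
    consecutive: int = 0  # number of blocked frames seen in a row

    def update(self, ranges_m: List[float]) -> bool:
        """Return True once blockage has persisted long enough to justify cleaning."""
        if window_hit_fraction(ranges_m) >= BLOCK_FRACTION:
            self.consecutive += 1
        else:
            self.consecutive = 0  # a clean frame resets the counter
        return self.consecutive >= PERSIST_FRAMES


monitor = BlockageMonitor()
splattered = [0.03, 0.05, 0.02, 40.0]  # three of four returns hit the window
for frame in range(6):
    print(frame, monitor.update(splattered))
```

Raising `PERSIST_FRAMES` trades responsiveness for fewer false alarms, which is exactly the availability-versus-overuse tension Keilaf describes.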

Just Put It Behind The Windshield!

Why overcomplicate things? We human drivers have been surviving muck, rain, and insects for over a century of motoring. Just stick the sensors behind the windshield and use the wipers and water jets. As it turns out, this isn’t as straightforward as it seems. Sensors typically look at the world through a relatively small, fixed lens. While a person can peer round a nasty insect splatter, a single bug splat could obscure much of a sensor’s view.

“I find myself dedicating an unbelievable amount of time to thinking about these critters as my team at Ford helps make progress on its self-driving vehicle business,” said Venky Krishnan, Autonomous Vehicle Systems Core Supervisor, Ford Motor Company. “It turns out that insects pose a significant challenge to self-driving vehicles.”

To further complicate matters, each technology has its own, more specialized requirements. For example, thermal imaging cameras peer out at the world through silicon windows because glass is opaque at the infrared wavelengths they use. Requiring the windshield to be made from silicon is hardly compatible with the industry imperative to reduce the cost of autonomous vehicles.

LiDAR has its challenges too. When you are launching photons over hundreds of meters, desperate to capture the few that bounce back so you can spot that apocryphal child in dark clothes who has strayed onto the road, you do not want an aerodynamically raked windshield bouncing the returning light off in all directions.
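A back-of-envelope link budget shows why every surface in the optical path matters. The Python sketch below assumes, for illustration, a diffuse (Lambertian) target that fills the laser beam, so the fraction of emitted power collected is roughly ρ·A/(πR²), where ρ is the target reflectivity, A the receiver aperture area and R the range. Any extra window with one-way transmission T costs a further factor of T², because the light crosses it on the way out and again on the way back. The 10% reflectivity, 25 mm aperture and 80% transmission are hypothetical values, and the model ignores atmospheric loss and scattering.

```python
import math


def return_fraction(reflectivity: float, aperture_diameter_m: float,
                    range_m: float, window_transmission: float = 1.0) -> float:
    """Approximate fraction of emitted power collected from a Lambertian target.

    Assumes the target fills the beam and ignores atmospheric losses.
    The window is crossed twice (outbound and return), hence transmission**2.
    """
    aperture_area = math.pi * (aperture_diameter_m / 2) ** 2
    geometric = reflectivity * aperture_area / (math.pi * range_m ** 2)
    return geometric * window_transmission ** 2


# Hypothetical example: dark clothing (10% reflective) at 200 m, 25 mm receiver.
bare = return_fraction(0.10, 0.025, 200.0)
behind_glass = return_fraction(0.10, 0.025, 200.0, window_transmission=0.8)
print(f"bare sensor:   {bare:.2e}")
print(f"behind window: {behind_glass:.2e} ({behind_glass / bare:.0%} of bare)")
```

Even before a windshield gets involved, only around four parts in ten billion of the emitted light make it back under these assumptions, so giving away roughly another third to an extra pane of glass is a serious cost.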

The Empty Tank Problem

The tried-and-tested approach to automotive cleaning is liquid jets. It’s hard to overstate the appeal of an established technology developed to the exacting quality, durability, and cost specifications of the automotive industry. However, applying this approach to sensors brings new challenges in capacity, performance, and sustainability.

Because autonomous driving systems have comparatively high cleaning requirements versus a human driver, cleaning fluid tank capacity is shaping up to be a significant issue. Early trials with Level 4 and 5 autonomous systems have shown multi-liter reservoirs depleted in as little as 400 miles.

“Are we in a situation where we are going to take the gasoline tank from a car and replace it with a windshield washer reservoir to keep these things clean?” asked Jim Schwyn, Chief Technical Officer of Valeo North America.

The Performance Problem

There are issues with performance too. Think about the windshield on your car. Insects, tree sap, or (worst of all) bird droppings often leave a residue, and that is with the benefit of a wiper. However, fitting a wiper to every sensor is unacceptable to car designers because the sensors often have optical coatings that could be abraded by mechanical cleaning. One alternative is to use more aggressive cleaning chemicals, which brings us neatly to the environment, where the washer fluid ultimately ends up.

“We are continuing to push ourselves to clean the various sensors with a fraction of the fluid that traditional nozzle technology consumes,” said Russell Hester, Director of Business Development at dlhBOWLES, a leader in automotive fluid management systems.

Hester says the company has one particular application that reduces fluid consumption by 30 percent without compromising cleaning performance. “So, either the OEM gets that much more duration out of the same bottle volume, or if they want, they cut the bottle volumes by that amount and save space and weight,” he continued.
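As a quick sanity check on what a 30 percent saving buys, here is a small Python sketch. It assumes, purely for illustration, that fluid use scales linearly with distance driven, and it borrows the 400-mile figure from the early trials mentioned above as a baseline; the 4-liter tank is a hypothetical size. One subtlety falls out of the arithmetic: using 30 percent less fluid per mile stretches the same bottle by a factor of 1/0.7, or roughly 43 percent, rather than 30 percent.

```python
def miles_per_tank(baseline_miles: float, reduction: float) -> float:
    """Distance covered by the same tank when each clean uses (1 - reduction) of the fluid."""
    if not 0.0 <= reduction < 1.0:
        raise ValueError("reduction must be in [0, 1)")
    return baseline_miles / (1.0 - reduction)


def downsized_tank(baseline_liters: float, reduction: float) -> float:
    """Tank volume that keeps the original range when consumption drops by `reduction`."""
    if not 0.0 <= reduction < 1.0:
        raise ValueError("reduction must be in [0, 1)")
    return baseline_liters * (1.0 - reduction)


baseline_miles = 400.0  # early-trial depletion figure quoted in the article
tank_liters = 4.0       # hypothetical "multi-liter" reservoir
saving = 0.30           # claimed reduction in fluid consumption

print(f"same tank lasts {miles_per_tank(baseline_miles, saving):.0f} miles")
print(f"or shrink the tank to {downsized_tank(tank_liters, saving):.1f} L")
```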

New Technology Can Address Sensor Cleaning

Cards on the table: as a technology consultant, I am not exactly downbeat about the idea of a thorny real-world problem in need of inventive solutions. And there is undoubtedly market pull; BMW put out a call last year on the popular open innovation platform NineSigma requesting novel cleaning technologies that either reduced or eliminated the use of fluids.

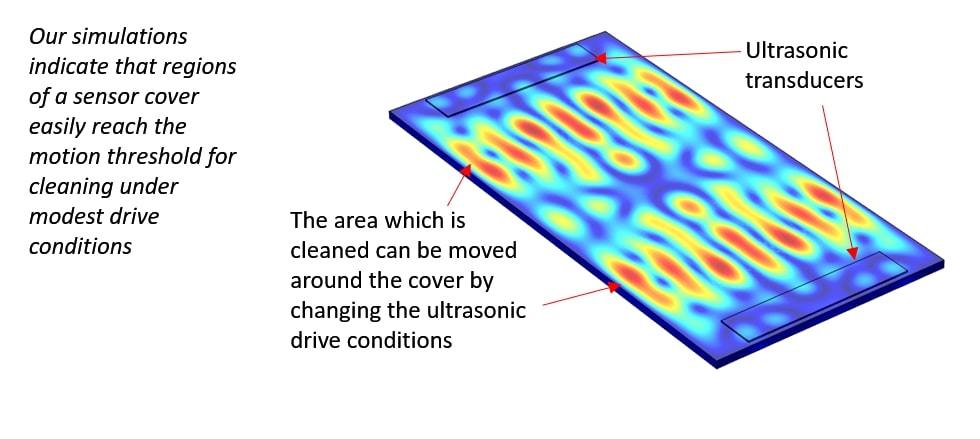

You can split novel approaches into two broad categories: those that clean a surface and those that prevent it from getting dirty in the first place. Among novel cleaning approaches, lens agitation caught our eye here at TTP. Agitating cleaning fluid at ultrasonic frequencies (tens of thousands of tiny displacements every second) is a well-known way of breaking up dirt (and kidney stones). Rather than blasting the dirt off with a pressurized jet of water, a thin film of fluid is applied. The vibration of the lens transfers the dirt into the fluid and then atomizes the fluid from the surface, with the dirt in tow.

“Ultrasonic PZT actuators are used in a range of familiar products from room fragrance dispensers to parking sensors, so their application here shouldn’t be too controversial,” said Jellicoe. “The specific challenge with sensor cleaning is to determine how hard the actuator needs to be driven for effective cleaning and whether this is going to drain the battery or shake itself to pieces before the vehicle has left your drive.”

Atomizing a liquid requires the surface to move fast enough to overcome surface tension. Finite element analysis of a typical lens geometry suggests this can be achieved with the sort of actuator you could find in a doorbell buzzer. So, while this is a long way from a real-world demonstration of effective cleaning, there are reasons to be optimistic that the technology could reduce the use of cleaning fluid.
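For a feel for the numbers, here is a rough Python sketch for a lens driven sinusoidally at frequency f with displacement amplitude A. The peak surface velocity is 2πfA and the peak acceleration is (2πf)²A, the latter a useful proxy for the mechanical stress Jellicoe worries about. To estimate the size of the droplets thrown off, it uses Lang’s widely cited empirical correlation for ultrasonic atomization, d ≈ 0.34·(8πσ/(ρf²))^(1/3), where σ and ρ are the surface tension and density of the fluid. The 40 kHz drive and 2 µm amplitude are hypothetical values, not figures from our finite element analysis, and the fluid properties are those of water at room temperature.

```python
import math

G = 9.81  # standard gravity, m/s^2


def peak_velocity(freq_hz: float, amplitude_m: float) -> float:
    """Peak surface velocity of a sinusoidal vibration: v = 2*pi*f*A."""
    return 2 * math.pi * freq_hz * amplitude_m


def peak_acceleration(freq_hz: float, amplitude_m: float) -> float:
    """Peak surface acceleration of a sinusoidal vibration: a = (2*pi*f)^2 * A."""
    return (2 * math.pi * freq_hz) ** 2 * amplitude_m


def lang_droplet_diameter(freq_hz: float, surface_tension: float = 0.072,
                          density: float = 1000.0) -> float:
    """Median droplet diameter from Lang's empirical correlation (defaults: water)."""
    capillary_wavelength = (8 * math.pi * surface_tension / (density * freq_hz ** 2)) ** (1 / 3)
    return 0.34 * capillary_wavelength


f, A = 40e3, 2e-6  # hypothetical drive: 40 kHz, 2 micrometer amplitude
print(f"peak velocity:     {peak_velocity(f, A):.2f} m/s")
print(f"peak acceleration: {peak_acceleration(f, A) / G:,.0f} g")
print(f"droplet diameter:  {lang_droplet_diameter(f) * 1e6:.0f} um")
```

Because acceleration grows with the square of frequency, the drive level matters as much as the frequency when judging whether a lens will shake itself to pieces.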

Air Screens: An In-Depth Look

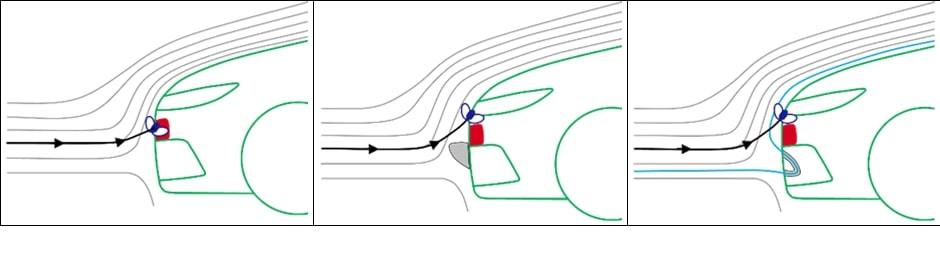

If cleaning grime off a sensor is hard, why not prevent it from landing in the first place? Is that achievable? Consider the airflow graphic above.

The figure above shows three different scenarios for the flow of air around a sensor. In these diagrams, the direction of the airflow is represented by streamlines. Small debris, like dust particles, will tend to follow the streamlines. Larger debris, such as water droplets or bugs, will try to follow the streamlines, but when the air encounters a near-vertical surface and is forced to change direction abruptly, the debris has enough inertia to break free from the streamlines and impact the sensor. This is the scenario shown in the left panel.
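The distinction between debris that follows the flow and debris that does not is usually captured by the Stokes number, Stk = ρ_p·d²·U / (18·μ·L): the ratio of a particle’s response time to the time the flow takes to turn around an obstacle of size L. Particles with Stk much less than 1 track the streamlines; those with Stk much greater than 1 plough straight on. The Python sketch below evaluates it for a fine dust grain and a raindrop approaching a sensor housing at highway speed. The 30 m/s speed, 5 cm housing size and particle properties are illustrative assumptions, and the Stokes-drag model behind the formula is only strictly valid for small particles, so treat the raindrop figure as an order-of-magnitude indicator.

```python
AIR_VISCOSITY = 1.8e-5  # dynamic viscosity of air near room temperature, Pa*s


def stokes_number(particle_density: float, diameter_m: float,
                  speed_m_s: float, obstacle_size_m: float,
                  viscosity: float = AIR_VISCOSITY) -> float:
    """Stk = rho_p * d^2 * U / (18 * mu * L), assuming Stokes drag on the particle."""
    return particle_density * diameter_m ** 2 * speed_m_s / (18 * viscosity * obstacle_size_m)


U = 30.0  # hypothetical vehicle speed, m/s (~108 km/h)
L = 0.05  # hypothetical sensor housing size, m

dust = stokes_number(2500.0, 2e-6, U, L)      # 2 um mineral dust grain
raindrop = stokes_number(1000.0, 1e-3, U, L)  # 1 mm water droplet
print(f"dust Stk = {dust:.3f}   (follows the streamlines)")
print(f"rain Stk = {raindrop:.0f}   (breaks free and hits the sensor)")
```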

So, what can be done? In the middle panel, an aerodynamic feature (gray) is placed upstream of the sensor. This feature diverts the flow away from the sensor, so most of the debris lands on a less sensitive area of the vehicle. However, the aerodynamic feature adds bulk and reduces the sensor’s field of view. The right panel shows an air jet being used to produce a similar change in the flow path.

An aerodynamic duct in the bodywork redirects oncoming air back into the flow (blue streamline), in effect pushing the bulk of the flow (and any entrained debris!) away from the sensor. This approach is more complex, but it preserves the sensor’s field of view and keeps a cleaner line on the car’s bumper, which matter for safety and aesthetics respectively.

Sensor Cleaning Systems Under Development

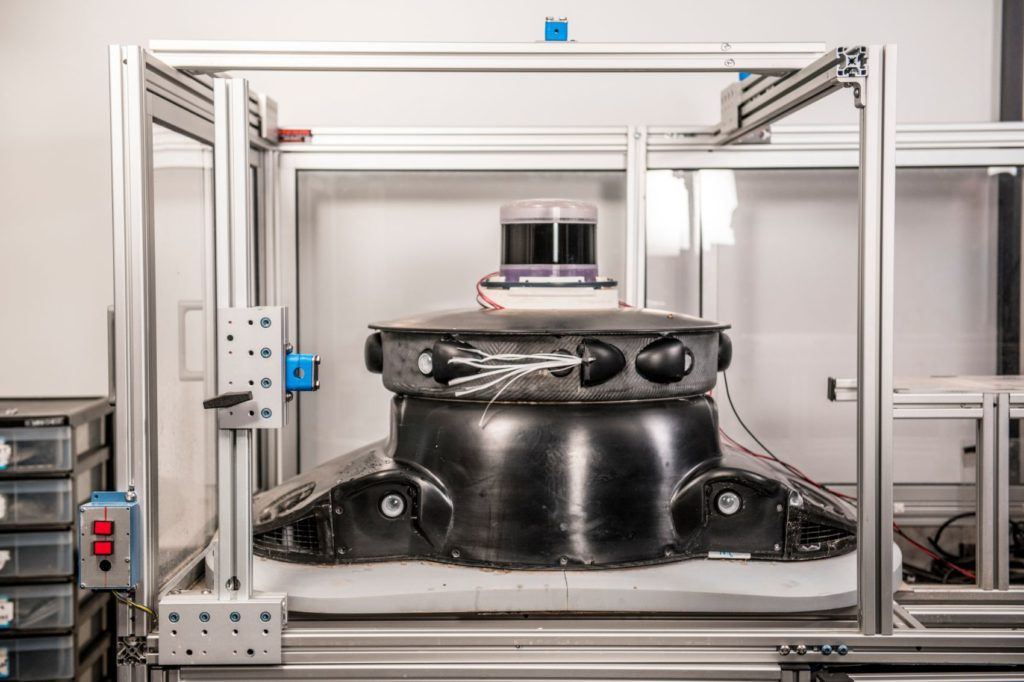

Ford has put these aerodynamic principles into practice. Ford’s ‘tiara’ sensor suite funnels air out through slots near the sensor lens, deflecting debris. “Anytime bugs are making a bee-line for a camera lens, the air flowing out of the tiara pushes it aside, so it doesn’t make contact with the lens,” Krishnan explained. “It’s like changing the course of an asteroid on a crash-course with Earth.”

Krishnan says they started with CFD simulations to model a wide range of environmental scenarios. “Then we progressed to the lab, but we quickly hit the limits,” he said. “It is difficult to simulate real-world environmental conditions, so we equipped test cars and drove through a forest full of bugs.”

According to Krishnan, the approach has been remarkably successful, deflecting the “vast majority” of bugs. Beyond that, however, OEMs and their suppliers are not revealing much else. “That is because it plays to safety,” Krishnan continued. “As you move from a private ownership model to autonomous ride-sharing, safety is going to replace styling in driving consumer behavior.”

Beyond choosing the right cleaning solution, there is also the question of when best to apply it. Some companies have already started tackling this, hoping to get ahead of the curve.

“Our LiDAR, InnovizOne, was designed with a blockage algorithm that enables the sensor to recognize if there’s a loss in availability or degradation of performance due to loss of light passing through the obstacle,” Keilaf said. “Our unique sensor can classify the different types of blockage to use the right cleaning scheme, such as cleaning with water spray, air pressure, heating, etc. Finally, based on input coming from the vehicle, our sensor can decide whether to continue to function with limited availability or to enable the cleaning. Eventually, as in real life, the best decision the car can make is avoiding blockage whatsoever by using the right cleaning system.”
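The decision flow Keilaf describes (detect, classify, then either clean or carry on with limited availability) can be sketched as a small policy function. The Python below is a hypothetical illustration and not Innoviz’s algorithm: the blockage classes, the class-to-scheme mapping, the 20 percent tolerance and the `fluid_available` flag standing in for “input coming from the vehicle” are all assumptions made for the example.

```python
from enum import Enum


class Blockage(Enum):
    NONE = "none"
    WATER = "water"
    DUST = "dust"
    ICE = "ice"
    INSECT = "insect"


class Action(Enum):
    CONTINUE = "continue"
    CONTINUE_DEGRADED = "continue with limited availability"
    AIR = "air pressure"
    HEAT = "heating"
    SPRAY = "water spray"


# Hypothetical mapping from blockage class to cleaning scheme.
SCHEME_FOR = {
    Blockage.WATER: Action.AIR,
    Blockage.DUST: Action.AIR,
    Blockage.ICE: Action.HEAT,
    Blockage.INSECT: Action.SPRAY,
}


def choose_action(blockage: Blockage, blocked_fraction: float,
                  fluid_available: bool, tolerable_fraction: float = 0.2) -> Action:
    """Pick a cleaning scheme, or keep running degraded if cleaning isn't warranted or possible."""
    if blockage is Blockage.NONE:
        return Action.CONTINUE
    if blocked_fraction <= tolerable_fraction:
        return Action.CONTINUE_DEGRADED  # minor blockage: don't spend cleaning resources yet
    scheme = SCHEME_FOR[blockage]
    if scheme is Action.SPRAY and not fluid_available:
        return Action.CONTINUE_DEGRADED  # vehicle reports the washer tank is empty
    return scheme


print(choose_action(Blockage.INSECT, 0.5, fluid_available=True).value)   # water spray
print(choose_action(Blockage.INSECT, 0.5, fluid_available=False).value)  # continue with limited availability
```

Keeping the “carry on degraded” branch explicit is what stops a minor smear from draining the reservoir that a serious bug strike will need later.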

Where Will The Innovation Come From?

There is an emerging consensus that no single “magic bullet” sensor offers vehicles sufficient data to operate in all environmental conditions and safety scenarios. Similarly, none of the cleaning technologies on its own offers the high reliability that car companies require for Level 4 or 5 autonomy. I expect everything featured in this article to have a role to play. But while the need for innovative new cleaning technology has been established, it is less obvious who will drive it.

“What remains to be determined is where in the supply chain the innovation will come from. It’s not a great skill fit for a typical sensor start-up, and the tier 1 automotive suppliers are faced with a chicken and egg situation,” Jellicoe explained. “They are nervous about spending a lot on development without substantial OEM buy-in but are unlikely to get buy-in until the OEMs can see what the solution looks like. Whoever takes the plunge and develops a self-cleaning product will have a useful point of differentiation in a competitive space.”