Rain and fog can make life difficult for even the best drivers; just ask any F1 racer. But it isn’t just humans who find adverse weather conditions difficult. Dr. Andrew Baker-Campbell looks at how they affect automotive LiDAR systems.

At TTP, we have been working on ways of keeping LiDAR sensors clear of the dirt and grime they pick up in real-world driving conditions. This work, and the persistent drizzle in the UK, led me to wonder how different LiDAR systems cope when it rains. After all, LiDAR operates by bouncing pulses of IR light off its surroundings, and water is known as a good IR absorber. A little digging raised another important question: is this something that affects all LiDAR systems equally?

How Does Water Affect LiDAR?

Two of the most common difficult driving conditions are heavy rain and thick fog, so I asked around to better understand how they affect LiDAR.

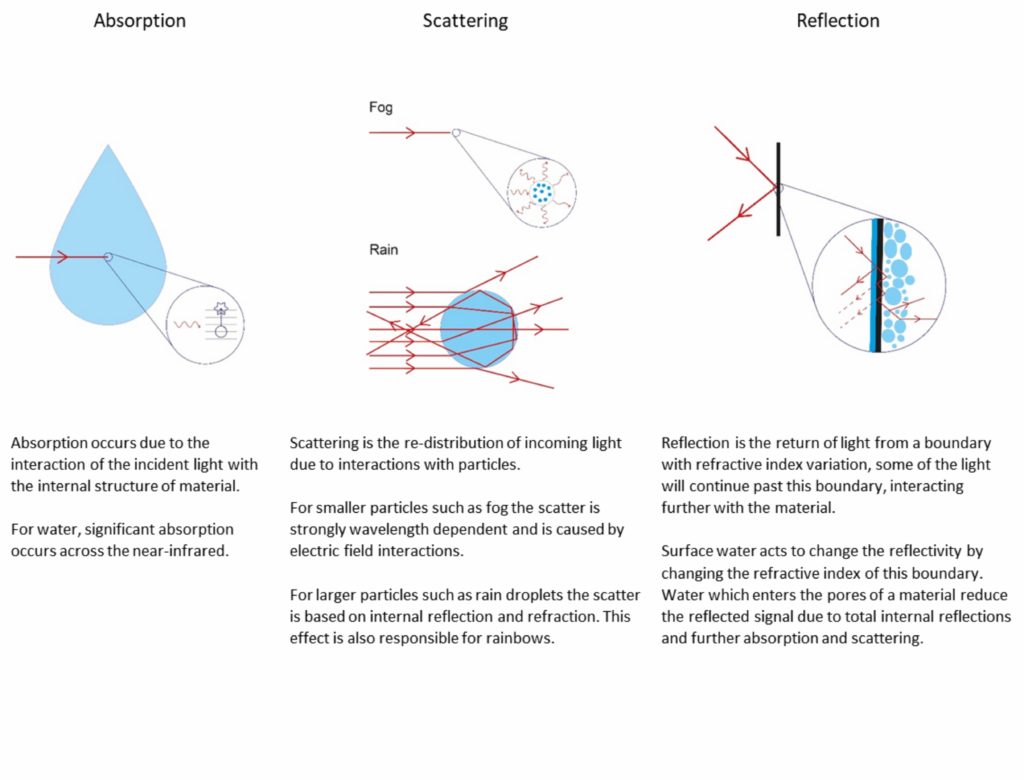

“There are three key mechanisms that impact the performance of a LiDAR system in wet conditions,” explained Alex Coney, an optical physicist on TTP’s Autonomous Driving team. “These are absorption, scatter, and a reduction in the reflectivity of surfaces.”

Absorption, Scattering & Reflection

For an everyday example of how wetting reduces reflectivity, think about sand. Wet sand is visibly darker than dry sand because light entering the wet layer is trapped by total internal reflection and scattered repeatedly among the grains, giving it more chances to be absorbed before it escapes. If you apply all three mechanisms to model the effects of different adverse weather conditions, you can see the effective range of a LiDAR system decrease as the weather worsens.

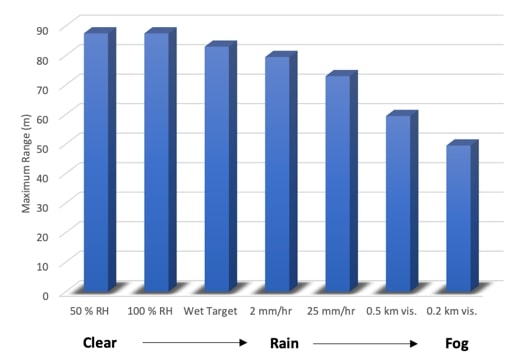

As you can see, relative humidity has little impact on range. This is because even at 100 percent humidity, the water is present as vapor, which has far less effect at these wavelengths than the liquid droplets of rain or fog. Once it starts to rain, however, there is enough liquid water in the air for absorption to play a significant role. To make things worse, wet surfaces typically reflect less light too. As you would expect, heavier rain means more absorption and therefore lower range: our simulation shows that heavy rain at 25 mm/h reduces the effective LiDAR range by 15 to 20 percent.
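
To make the two effects in the paragraph above concrete, here is a minimal Python sketch of how they combine for a target at a fixed range. It assumes Beer-Lambert attenuation over the round trip and an empirical power law linking rain rate to the extinction coefficient. The coefficients `A_PER_KM` and `B`, the reflectivities, and the function names are hypothetical placeholders. They are not TTP's simulation parameters or measured values.

```python
import math

# Hypothetical placeholder coefficients for an empirical power law
# alpha = A * rate**B (alpha in 1/km, natural-log extinction).
# Real coefficients depend on wavelength and drop-size distribution;
# these are NOT measured values and do not reproduce TTP's simulation.
A_PER_KM = 0.3
B = 0.6


def rain_extinction_per_km(rain_rate_mm_per_h: float) -> float:
    """Extinction coefficient (1/km) for a given rain rate, using the placeholder power law."""
    return A_PER_KM * rain_rate_mm_per_h ** B


def relative_return(rain_rate_mm_per_h: float, range_m: float,
                    wet_reflectivity: float, dry_reflectivity: float) -> float:
    """Return signal relative to the same target, at the same range, in clear and dry conditions.

    Two effects multiply:
      * Beer-Lambert attenuation over the round trip (2 * range_m), and
      * the drop in target reflectivity once the surface is wet.
    """
    alpha_per_m = rain_extinction_per_km(rain_rate_mm_per_h) / 1000.0
    two_way_transmission = math.exp(-2.0 * alpha_per_m * range_m)
    return two_way_transmission * wet_reflectivity / dry_reflectivity


if __name__ == "__main__":
    # Assumed reflectivities: 0.5 when dry, 0.4 once the target is wet.
    for rate in (0.0, 2.5, 10.0, 25.0):
        wet = 0.5 if rate == 0.0 else 0.4
        signal = relative_return(rate, 100.0, wet, 0.5)
        print(f"{rate:5.1f} mm/h -> {signal:.3f} of the clear, dry signal at 100 m")
```

Because attenuation acts on both the outgoing and the returning light, range enters the exponent twice. This is why even modest extinction coefficients cost a noticeable share of the signal at longer ranges.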

A raindrop is around 1,000 times larger than the laser wavelength, so scatter does not play a significant part. However, scatter becomes important in foggy conditions, where the tiny droplets suspended in the air are comparable in size to the wavelength of the light. As you can see, fog leads to a dramatic 50 percent reduction in LiDAR range, which, just as with a human driver, means a vehicle will need to slow down for safety.
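
This size comparison is usually expressed with the dimensionless size parameter x = πd/λ, where d is the droplet diameter and λ the wavelength. The sketch below computes x for a representative raindrop and fog droplet at both common LiDAR wavelengths. The droplet diameters and the cut-offs between the Rayleigh, Mie, and geometric-optics regimes are rough assumptions chosen for illustration, and real transitions are gradual.

```python
import math

WAVELENGTHS_M = {"905 nm": 905e-9, "1550 nm": 1550e-9}

# Representative droplet diameters (assumed for illustration): real raindrops span
# roughly 0.5-5 mm, while fog droplets are a few micrometres across.
DROPLETS_M = {"raindrop (1 mm)": 1e-3, "fog droplet (10 um)": 10e-6}


def size_parameter(diameter_m: float, wavelength_m: float) -> float:
    """Mie size parameter x = 2*pi*r / lambda = pi*d / lambda."""
    return math.pi * diameter_m / wavelength_m


def scattering_regime(x: float) -> str:
    """Very rough regime label. The cut-offs at 0.1 and 100 are assumptions;
    in reality the regimes blend into one another."""
    if x < 0.1:
        return "Rayleigh"
    if x < 100.0:
        return "Mie"
    return "geometric optics"


if __name__ == "__main__":
    for drop_name, diameter in DROPLETS_M.items():
        for wl_name, wavelength in WAVELENGTHS_M.items():
            x = size_parameter(diameter, wavelength)
            print(f"{drop_name} at {wl_name}: x = {x:7.1f} ({scattering_regime(x)})")
```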

Which LiDAR System Works Best In Rain?

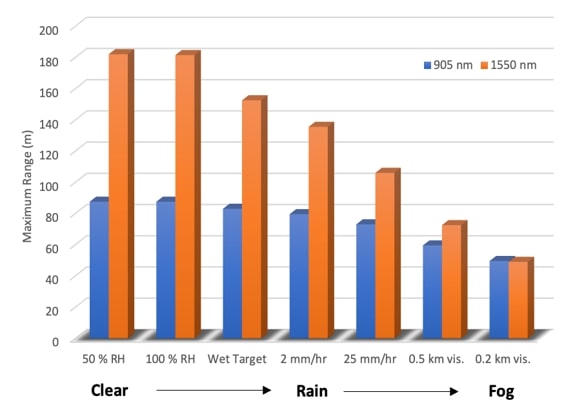

LiDAR sensors can be broadly divided into two camps depending on the wavelength of light they use. The two most common wavelengths are 905 nm and 1550 nm, and there is plenty of debate over which is optimal. “905 nm uses lower-cost components, but its range is limited by eye safety,” Coney said. “1550 nm requires more expensive optical amplification and detectors but can be run at higher power because less of the light can reach the delicate retina of your eye.”

In ideal conditions, the higher power of a 1550 nm LiDAR system gives it a longer effective range. However, absorption, scatter, and reduced surface reflectivity in wet conditions all have a more serious impact at longer wavelengths. The simulation above shows that the range advantage of a more expensive 1550 nm system could be eliminated as the weather worsens.
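
The trade-off can be sketched with a simplified LiDAR range equation. Received power falls with the square of range and with two-way atmospheric attenuation, and the maximum range is the point where it drops to the detector's threshold. In this Python sketch every number is a hypothetical, normalized placeholder. The 1550 nm system is given four times the transmit power and, following the point above, a larger extinction coefficient. It illustrates the trend only and does not reproduce the simulation in the figure.

```python
import math

# All numbers below are hypothetical, normalized placeholders chosen only to show the trend.
P_MIN = 2e-5          # detection threshold (arbitrary units)
REFLECTIVITY = 0.5    # target reflectivity (same for both wavelengths, for simplicity)

# name: (relative transmit power, extinction in clear air [1/m], extinction in fog [1/m])
SYSTEMS = {
    "905 nm": (1.0, 1e-4, 0.020),
    "1550 nm": (4.0, 2e-4, 0.035),  # more power allowed, but assumed to attenuate more
}


def received_power(range_m, p_tx, reflectivity, alpha_per_m):
    """Simplified LiDAR equation for a diffuse target filling the beam:
    P_rx ~ P_tx * rho * exp(-2 * alpha * R) / R**2 (optics and aperture folded into the units)."""
    return p_tx * reflectivity * math.exp(-2.0 * alpha_per_m * range_m) / range_m ** 2


def max_range(p_tx, reflectivity, alpha_per_m, p_min=P_MIN, r_lo=0.1, r_hi=10_000.0, iters=100):
    """Bisection for the range at which the return falls to the detection threshold.

    received_power decreases monotonically with range, so there is a single crossing."""
    for _ in range(iters):
        mid = 0.5 * (r_lo + r_hi)
        if received_power(mid, p_tx, reflectivity, alpha_per_m) >= p_min:
            r_lo = mid
        else:
            r_hi = mid
    return 0.5 * (r_lo + r_hi)


if __name__ == "__main__":
    for name, (p_tx, alpha_clear, alpha_fog) in SYSTEMS.items():
        clear = max_range(p_tx, REFLECTIVITY, alpha_clear)
        fog = max_range(p_tx, REFLECTIVITY, alpha_fog)
        print(f"{name}: {clear:6.1f} m in clear air, {fog:5.1f} m in fog")
```

With these placeholder values, the 1550 nm system roughly doubles the clear-air range, because range scales with the square root of power when attenuation is negligible. Its lead disappears once the exponential attenuation term dominates.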

New Technology Can Help

Would an autonomous vehicle have to slow down or stop in heavy fog and severe downpours, just as a human driver would? Perhaps, but some clever techniques can minimize the loss in performance.

Frequency-Modulated Continuous Wave (FMCW) LiDAR is a highly sensitive technique that performs well even when few photons are detected, and it works in an entirely different way to more familiar time-of-flight (ToF) systems. Instead of firing short pulses like a ToF LiDAR, it uses a more complex frequency-tunable laser that emits light continuously while the frequency (and hence wavelength) of that light is modulated. Some of the light is projected outwards to measure the scene, while the rest is kept within the sensor as a reference known as the local oscillator.

The return signal is mixed with the local oscillator to determine both the distance and the velocity of the object that reflected the light. Because much more light reaches the detector in this continuous measurement mode, the method has much larger signals to measure than ToF.
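
The mixing step can be illustrated with one common FMCW scheme: a triangular chirp that sweeps the laser frequency up and then down. Mixing the return with the local oscillator produces a beat frequency proportional to the round-trip delay. For a target moving towards the sensor, the Doppler shift lowers this beat on the up-ramp and raises it on the down-ramp, so the two beats together give both distance and velocity. The chirp shape, parameter values, and function names below are assumptions for illustration, not a description of any particular product.

```python
from dataclasses import dataclass

C = 299_792_458.0  # speed of light, m/s


@dataclass
class Chirp:
    bandwidth_hz: float   # optical frequency sweep per ramp (hypothetical)
    ramp_time_s: float    # duration of one up- or down-ramp (hypothetical)
    wavelength_m: float   # centre wavelength, used for the Doppler shift

    @property
    def slope_hz_per_s(self) -> float:
        return self.bandwidth_hz / self.ramp_time_s


def beat_frequencies(range_m: float, velocity_mps: float, chirp: Chirp) -> tuple[float, float]:
    """Forward model: up- and down-ramp beat frequencies for a target at range_m,
    closing at velocity_mps (positive = approaching). Assumes f_range > f_doppler."""
    f_range = 2.0 * range_m * chirp.slope_hz_per_s / C   # round-trip delay -> frequency offset
    f_doppler = 2.0 * velocity_mps / chirp.wavelength_m   # Doppler shift of the return
    return f_range - f_doppler, f_range + f_doppler


def range_and_velocity(f_up: float, f_down: float, chirp: Chirp) -> tuple[float, float]:
    """Inverse: recover range (m) and radial velocity (m/s) from the two beat frequencies."""
    f_range = 0.5 * (f_up + f_down)
    f_doppler = 0.5 * (f_down - f_up)
    return C * f_range / (2.0 * chirp.slope_hz_per_s), chirp.wavelength_m * f_doppler / 2.0


if __name__ == "__main__":
    chirp = Chirp(bandwidth_hz=1e9, ramp_time_s=10e-6, wavelength_m=1550e-9)
    f_up, f_down = beat_frequencies(100.0, 10.0, chirp)
    print(f"beats: {f_up / 1e6:.2f} MHz up, {f_down / 1e6:.2f} MHz down")
    print("recovered (range m, velocity m/s):", range_and_velocity(f_up, f_down, chirp))
```

Feeding the forward model's output back into `range_and_velocity` recovers the target's distance and speed. A real sensor would first have to estimate `f_up` and `f_down` from the digitized beat signal, typically with an FFT.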

FMCW can handle much more environmental attenuation, increasing the range in poor conditions. This technology’s potential is huge, as evidenced by the recent valuations of FMCW LiDAR start-ups such as Silc and Aeva.
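
One well-known reason coherent detection copes with heavy attenuation is the mixing itself. The interference term between the return and the strong local oscillator scales with the square root of the product of their powers, so a faint return produces a much larger signal than it would on its own. The Python sketch below compares the two, assuming ideal polarization and spatial-mode overlap. The function names and power levels are hypothetical.

```python
import math


def direct_signal(p_signal_w: float) -> float:
    """Direct detection: detector output is proportional to the returned optical power."""
    return p_signal_w


def beat_amplitude(p_signal_w: float, p_lo_w: float) -> float:
    """Coherent detection: |E_lo + E_sig|^2 contains the cross term
    2 * sqrt(P_lo * P_sig) * cos(2*pi*f_beat*t + phi).
    Assumes perfect polarization and mode overlap between LO and return."""
    return 2.0 * math.sqrt(p_lo_w * p_signal_w)


def mixing_gain(p_signal_w: float, p_lo_w: float) -> float:
    """Ratio of the beat term to the directly detected return: 2 * sqrt(P_lo / P_sig)."""
    return beat_amplitude(p_signal_w, p_lo_w) / direct_signal(p_signal_w)


if __name__ == "__main__":
    p_lo = 1e-3  # hypothetical 1 mW local oscillator
    for p_sig in (1e-9, 1e-12):  # hypothetical weak and very weak returns
        print(f"return {p_sig:.0e} W -> mixing gain {mixing_gain(p_sig, p_lo):,.0f}x")
```

The gain grows as the return gets weaker. This sketch ignores noise: the local oscillator also contributes shot noise, so the practical benefit is that weak returns are raised above the receiver's electronic noise rather than amplified for free.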

While FMCW offers many benefits, it’s not without its challenges. The tunable lasers required by FMCW LiDARs were originally developed for optical communications, which has very different requirements to automotive sensing. Bulky lasers costing several thousand dollars each would prevent the technology from being commercialized successfully, and the ability of companies like Silc and Aeva to address challenges like these will determine whether we ever see FMCW-equipped vehicles on our roads.

Full-waveform LiDAR is another technology that could help in the worst driving conditions. Used in airborne and satellite-based LiDAR systems to map forest canopies, it records not just the timing of a return pulse but the full pulse shape. This lets a system use differences between the reflected pulses to distinguish the scattered fog return from the return reflected by the target.
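
The idea can be sketched by looking at echo widths. A hard target returns a copy of the emitted pulse, while fog scatters light back from a whole volume of air, smearing the return over a much longer time. The Python sketch below builds a synthetic waveform, finds its peaks, measures each peak's full width at half maximum, and labels broad returns as fog. The waveform shapes, the threshold, and the 2× width tolerance are assumptions for illustration. Practical full-waveform processing typically uses more robust methods, such as fitting the waveform with a sum of Gaussian components.

```python
import math

C = 299_792_458.0  # speed of light, m/s


def synthetic_waveform(times_ns):
    """Hypothetical full-waveform return: a broad fog backscatter hump near the sensor
    plus a narrow echo from a hard target. Shapes and numbers are illustrative only."""
    samples = []
    for t in times_ns:
        x = t / 20.0                                         # fog hump peaks at 20 ns
        fog = 0.6 * x * math.exp(1.0 - x)
        target = math.exp(-0.5 * ((t - 200.0) / 2.0) ** 2)   # 2 ns-sigma echo at 200 ns
        samples.append(fog + target)
    return samples


def find_echoes(times_ns, waveform, threshold):
    """Return (time_ns, fwhm_ns) for each local maximum above threshold."""
    echoes = []
    for i in range(1, len(waveform) - 1):
        is_peak = waveform[i] > waveform[i - 1] and waveform[i] >= waveform[i + 1]
        if is_peak and waveform[i] >= threshold:
            half = waveform[i] / 2.0
            left, right = i, i
            while left > 0 and waveform[left] > half:          # walk out to half maximum
                left -= 1
            while right < len(waveform) - 1 and waveform[right] > half:
                right += 1
            echoes.append((times_ns[i], times_ns[right] - times_ns[left]))
    return echoes


def classify(echoes, pulse_fwhm_ns, tolerance=2.0):
    """Echoes about as narrow as the emitted pulse are labelled hard targets;
    much broader returns are treated as volume scatter such as fog."""
    labelled = []
    for t_ns, width_ns in echoes:
        kind = "target" if width_ns <= tolerance * pulse_fwhm_ns else "fog"
        labelled.append((kind, C * t_ns * 1e-9 / 2.0))        # round-trip time -> range in m
    return labelled


if __name__ == "__main__":
    times = [0.5 * i for i in range(600)]                      # 0-299.5 ns at 0.5 ns sampling
    waveform = synthetic_waveform(times)
    pulse_fwhm = 2.0 * math.sqrt(2.0 * math.log(2.0)) * 2.0    # FWHM of the 2 ns-sigma pulse
    for kind, range_m in classify(find_echoes(times, waveform, threshold=0.1), pulse_fwhm):
        print(f"{kind:>6} echo at {range_m:5.1f} m")
```

With this synthetic waveform, the broad hump a few metres from the sensor is labelled fog and the narrow echo near 30 m is labelled as the target. A system that simply reported the first peak above threshold would have reported the fog instead.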

Either Way, Sensor Fusion is a Must

No sensing modality performs perfectly under all conditions, so it is essential to use multiple sensors and fuse the information they produce to ensure the vehicle always has the best possible view of the world around it. In addition to the familiar LiDAR, cameras, and radar, AV developers are considering sensors borrowed from aircraft to help navigate bad weather. Start-ups such as Adasky and Brightway Vision have adapted two sensors routinely used in aircraft, thermal cameras and gated cameras respectively, for road vehicles.
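
At its simplest, fusing sensors means weighting each one by how much it can be trusted in the current conditions. The Python sketch below uses inverse-variance weighting of independent range estimates, which is the same arithmetic a Kalman filter uses to combine two estimates of a static quantity. The sensor names, ranges, and uncertainty values are hypothetical, and production AV stacks use far richer fusion schemes.

```python
import math
from dataclasses import dataclass


@dataclass
class RangeEstimate:
    sensor: str
    range_m: float
    sigma_m: float   # 1-sigma uncertainty for this sensor in the current conditions (hypothetical)


def fuse(estimates):
    """Inverse-variance weighted mean of independent estimates of the same quantity.
    Returns (fused range in m, fused 1-sigma uncertainty in m)."""
    weights = [1.0 / e.sigma_m ** 2 for e in estimates]
    total = sum(weights)
    fused = sum(w * e.range_m for w, e in zip(weights, estimates)) / total
    return fused, math.sqrt(1.0 / total)


if __name__ == "__main__":
    radar = RangeEstimate("radar", 50.4, 0.5)
    clear = [RangeEstimate("lidar", 50.0, 0.05), radar]
    # In fog, the LiDAR's uncertainty is assumed to grow sharply, so radar dominates.
    foggy = [RangeEstimate("lidar", 47.0, 2.0), radar]
    print("clear air:", fuse(clear))
    print("fog:      ", fuse(foggy))
```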

“One of the most exciting prospects of autonomous vehicles is how they will not just match the performance of human drivers but surpass it,” said Tom Jellicoe, Autonomous Driving lead at TTP. “As a UK resident, I’m all too familiar with weather interrupting travel. The key to unlocking this will be using methods of perceiving a vehicle’s environment which aren’t available to human drivers.”